Guests

- Emma Briant, a visiting research associate in human rights at Bard College who specializes in researching propaganda. Her upcoming book is titled Propaganda Machine: Inside Cambridge Analytica and the Digital Influence Industry.

- Brittany Kaiser, a Cambridge Analytica whistleblower who is featured in the documentary The Great Hack. Her book is titled Targeted: The Cambridge Analytica Whistleblower’s Inside Story of How Big Data, Trump, and Facebook Broke Democracy and How It Can Happen Again.

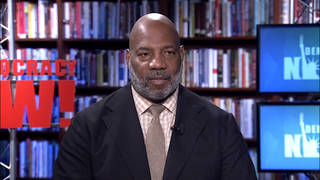

- Karim Amer, co-director of The Great Hack, which has been shortlisted for an Academy Award.

- Jehane Noujaim, co-director of The Great Hack, which has been shortlisted for an Academy Award. Her previous films include The Square and Control Room.

As new details emerge about how the shadowy data firm Cambridge Analytica worked to manipulate voters across the globe, we continue our look at the documentary, “The Great Hack.” We speak to Cambridge Analytica whistleblower Brittany Kaiser, the film’s co-directors Jehane Noujaim and Karim Amer, and the propaganda researcher Emma Briant.

Transcript

AMY GOODMAN: This is Democracy Now!, democracynow.org, The War and Peace Report. I’m Amy Goodman.

DAVID CARROLL: All of your interactions, your credit card swipes, web searches, locations, likes, they’re all collected, in real time, into a trillion-dollar-a-year industry.

CAROLE CADWALLADR: The real game changer was Cambridge Analytica. They worked for the Trump campaign and for the Brexit campaign. They started using information warfare.

AMY GOODMAN: New details are emerging about how the shadowy data firm Cambridge Analytica worked to manipulate voters across the globe, from the 2016 election in the United States to the Brexit campaign in Britain.

We are continuing our look at the Oscar-shortlisted documentary The Great Hack, which chronicles the rise and fall of Cambridge Analytica. And we’re continuing with our four guests.

Jehane Noujaim and Karim Amer are the co-directors of The Great Hack, which was just nominated for a BAFTA — that’s the British equivalent of the Oscars — and also made the Academy Award shortlist for documentaries. Jehane and Karim’s past films include The Square. Jehane was the director of Control Room.

Brittany Kaiser is also with us. She’s the Cambridge Analytica whistleblower who’s featured in the film. She has written the book Targeted: The Cambridge Analytica Whistleblower’s Inside Story of How Big Data, Trump, and Facebook Broke Democracy and How It Can Happen Again. This is her first interview since releasing a trove of documents on Cambridge Analytica’s involvement in elections around the world and other issues.

And we’re joined by Emma Briant, a visiting research associate in human rights at Bard College who specializes in researching propaganda. Her forthcoming book is Propaganda Machine: Inside Cambridge Analytica and the Digital Influence Industry.

We ended Part 1 of our discussion talking about the difference between polling and PSYOPs, or psychographics. So, Emma Briant, let’s begin with you in this segment of our broadcast. For people who aren’t aware of Cambridge Analytica, explain why you essentially became obsessed with it. And talk about the new level to which Cambridge Analytica and Facebook have taken manipulation.

EMMA BRIANT: Absolutely. The scale of what they were doing around the world, just, you know, the incredible potential of these technologies, hit me like a ton of bricks in 2016, when we realized that they were messing around in our own elections. Now, of course, this had been a human rights issue around the world for years before that. And, you know, I think democracies had been complacent about the ways in which things that are being ignored in other places would also ripple into our own societies. We’ve been engaged in counterterrorism wars around the world and developing these technologies for deployment against terrorists in Middle Eastern contexts, for stabilization projects, for counterterrorism at home, as well. And these technologies were developed in ways that were not enclosed from further development and commercialization and adaptation into politics.

And one of the problems that I realized as I was digging deeper and deeper into the more subterranean depths of the influence industry is how underregulated it is, how even our defense departments are not aware of what these companies are doing, beyond the purposes that they are hiring them for a lot of the time. There are conflicts of interest that are just not being declared. And this is deeply dangerous, because we can’t have bad actors weaponizing these technologies against us.

And we’re also seeing the extension of inequality and modern imperialism in action, of course, where these big firms are being created out of, you know, our national security infrastructure, are then able to go in between those campaigns and work for other states, which, whether they’re allies or enemies, may not necessarily have our democracies’ interests at heart.

So, the power and the importance of this is deeply, deeply important. These technologies that they were developing are aggressive technologies. This isn’t just advertising as normal. This is a surveillant infrastructure of technology that is, you know, weaving its way through our everyday lives. Our entire social world is now data-driven.

And what we’re now seeing is these — we’ve seen, in parallel with that, the rise of these influence companies out of the “war on terror,” both, for instance, in Israel, as well as in the West, these companies being developed to try to combat terrorism, but also then those are able to be — you know, reused these technologies for commercial purposes. And a multibillion-dollar industry grew up with the availability of technology and data. And we have been sleepwalking through it, and suddenly woke up in the last few years.

The sad thing is that we haven’t had enough attention to what we do about this. We can’t just scare people. We can’t just advertise the tools of Cambridge Analytica so that the executives can form new companies and go off and do the same thing again.

What we need is action. And we need to be calling on everybody to be getting in touch with their legislators, as Brittany Kaiser has been arguing, as well. And we need to be shouting loudly to make sure that military contractors are being properly governed. Oversight, reporting of conflicts of interest must go beyond the individuals who are engaged in an operation. It has to go to networks of companies. They need to be declaring who else they’re working for and with.

And, you know, we also need to actually regulate the whole influence industry, so these companies, like PR companies, like — lobbying is already somewhat regulated. But we still have an awful lot of ability to use shell companies to cover up what’s actually happening. If we can’t see what’s happening, we’re doomed. We have to make sure that these firms are able to be transparent, that we know what’s going on and that we can respond and show that we’re protecting our democratic elections.

AMY GOODMAN: Let’s go to a clip from The Great Hack about how the story of Cambridge Analytica and what it did with Facebook first came to light. Carole Cadwalladr is a reporter who broke the Cambridge Analytica story.

CAROLE CADWALLADR: I started tracking down all these Cambridge Analytica ex-employees. And eventually, I got one guy who was prepared to talk to me: Chris Wylie. We had this first telephone call, which was insane. It was about eight hours long. And poof!

CHRISTOPHER WYLIE: My name is Christopher Wylie. I’m a data scientist, and I helped set up Cambridge Analytica. It’s incorrect to call Cambridge Analytica a purely sort of data science company or an algorithm company. You know, it is a full-service propaganda machine.

AMY GOODMAN: “A full-service propaganda machine,” Chris Wylie says. Before him, Carole Cadwalladr. That brings us to Brittany Kaiser, because when Chris Wylie testified before the British Parliament, and they were saying, “How do we understand exactly what Cambridge Analytica has done?” he said, “Talk to Brittany Kaiser,” who ultimately did also testify before the British Parliament. But talk about what Chris Wylie’s role was at Cambridge Analytica, and how you got involved. I want you to step back and take us step by step, especially so young people understand how they can get enmeshed in something like this, and then how you ended up at Trump’s inauguration party with the Mercers.

BRITTANY KAISER: I think it’s very important to note this, because there are people all around the world that are working for tech companies, that I’m sure joined that company in order to do something good. They want the world to be more connected. They want to use technology in order to communicate with everybody, to get people more engaged in important issues. And they don’t realize that while you’re moving fast and breaking things, some things get so broken that you cannot actually contemplate or predict what those repercussions are going to look like.

Chris Wylie and I both really idealistically joined Cambridge Analytica because we were excited about the potential of using data for exciting and good impact projects. Chris joined in 2013 on the data side in order to start developing different types of psychographic models. So he worked with Dr. Aleksandr Kogan and the Cambridge Psychometrics Centre at Cambridge University in order to start doing experiments with Facebook data, to be able to gather that data, which we now know was taken under the wrong auspices of academic research, and was then used in order to identify people’s psychographic groupings.

AMY GOODMAN: Now, explain that, psychographic groupings, and especially for people who are not on Facebook, who don’t understand its enormous power and the intimate knowledge it has of people. Think of someone you’re talking to who’s never experienced Facebook. Explain what is there.

BRITTANY KAISER: Absolutely. So, the amount of data that is collected about you on Facebook and on any of your devices is much more than you’re really made aware of. You probably haven’t ever read the terms and conditions of any of these apps on your phone. But if you actually took the time to do it and you could understand it, because most of them are written for you not to understand — it’s written in legalese — you would realize that you are giving away a lot more than you would have ever agreed to if there was transparency. This is your every move, everywhere you’re going, who you’re talking to, who your contacts are, what information you’re actually giving in other apps on your phone, your location data, all of your lifestyle, where you’re going, what you’re doing, what you’re reading, how long you spend looking at different images and websites.

This amount of behavioral data gives such a good picture of you that your behavior can be predicted, as Karim was talking about earlier, to a very high degree of accuracy. And this allows companies like Cambridge Analytica to understand how you see the world and what will motivate you to go and take an action — or, unfortunately, what will demotivate you. So, that amount of data, available on Facebook ever since you joined, allows a very easy platform for you to be targeted and manipulated.

And when I say “psychographic targeting,” I’m sure you probably are a little bit more familiar with the Myers-Briggs test, so the Myers-Briggs that asks you a set of questions in order to understand your personality and how you see the world. The system that Cambridge Analytica used is actually a lot more scientific. It’s called the OCEAN five-factor model. And OCEAN stands for O for openness, C for conscientiousness, whether you prefer plans and order or you’re a little bit more fly by the seat of your pants. Extraversion, whether you gather your energy from being out surrounded by people, or you’re introverted and you prefer to gather your energy from being alone. If you are agreeable, you care about your family, your community, society, your country, more than you care about yourself. And if you are disagreeable, then you are a little bit more egotistical. You need messages that are about benefits to you. And then the worst is neurotic. You know, it’s not bad to be neurotic. It means that you are a little bit more emotional. It means, unfortunately, as well, that you are motivated by fear-based messaging, so people can use tactics in order to scare you into doing what they want you to do.

And this is what was targeted when they were gathering that data out of Facebook to figure out which group you belonged to. They found about 32 different groups of people, different personality types. And there were groups of psychologists that were looking into how they could understand that data and convert that into messaging that was just for you.

I need to remind everybody that the Trump campaign put together over a million different advertisements that were put out, a million different advertisements with tens of thousands of different campaigns. Some of these messages were for just you, were for 50 people, a hundred people. Obviously, certain groups are thousands, tens of thousands or millions. But some of them were targeted very much directly at the individual, to know exactly what you’re going to click on and exactly what you care about.

AMY GOODMAN: So they were doing this before Cambridge Analytica. But describe — I want to actually go to a Bannon clip, Steve Bannon, who takes credit for naming Cambridge Analytica, right? Because before that you had SCL, SCL Defence.

BRITTANY KAISER: Yes.

AMY GOODMAN: And then it becomes Cambridge Analytica, for Cambridge University, right? Where Kogan got this information that he culled from Facebook.

BRITTANY KAISER: Yes.

AMY GOODMAN: This is former White House chief strategist Steve Bannon in an interview at a Financial Times conference in March 2018. Bannon said that reports that Cambridge Analytica improperly accessed data to build profiles on American voters and influence the 2016 presidential election were politically motivated. Months later, evidence emerged linking Bannon to the Cambridge Analytica scandal, which resulted in a $5 billion fine for Facebook. Bannon is a founder and former board member of the political consulting firm; he was vice president of Cambridge Analytica.

STEPHEN BANNON: All Cambridge Analytica is the data scientists and the applied applications here in the United States. It has nothing to do with the international stuff. The Guardian actually tells you that, and The Observer tell you that, when you get down to the 10th paragraph, OK? When you get down to the 10th paragraph. And what Nix does overseas is what Nix does overseas. Right? It was a data — it was a data company.

And by the way, Cruz’s campaign and the Trump campaign say, “Hey, they were a pretty good data company.” But this whole thing on psychographics was optionality in the deal. If it ever worked, it worked. But it hasn’t worked, and it doesn’t look like it’s going to work. So, it was never even applied.

AMY GOODMAN: So, that’s Steve Bannon in 2018, key to President Trump’s victory and to his years so far in office, before he was forced to — before he was forced out. What was your relationship with Steve Bannon? You worked at Cambridge Analytica for over three years. You had the keys to the castle, is that right, in Washington?

BRITTANY KAISER: Yes, for a while I actually split the keys to what is Steve’s house, with Alexander Nix, because we used his house as our office. His house is also used as a Breitbart office in the basement. It’s called the “Breitbart Embassy” on Capitol Hill. And that’s where I would go for meetings.

AMY GOODMAN: Who funded that?

BRITTANY KAISER: I believe it was owned by the Mercer family, that building. And we would come into the basement and use that boardroom for our meetings. And we would use that for planning who we were going to go pitch to, what campaigns we were going to work for, what advocacy groups, what conservative 501(c)(3)s and (c)(4)s he wanted us to go see.

And I didn’t spend a lot of time with Steve, but the time I did was incredibly insightful. Almost every time I saw him, he’d be showing me some new Hillary Clinton hit video that he had come out with, or announcing that he was about to throw a book launch party for Ann Coulter for ¡Adios, America!, which was something that he invited both me and Alexander to, and we promptly decided to leave the house before she arrived.

But Steve was very influential in the development of Cambridge Analytica and who we were going to go see, who we were going to support with our technology. And he made a lot of the introductions, which in the beginning seemed a little less nefarious than they did later on, when he got very confident and started introducing us to white right-wing political parties across Europe and in other countries, and I tried to get meetings with the main political parties, or leftist or green parties instead, to make sure that those far-right-wing parties that do not have the world’s best interests at heart could not get access to these technologies.

AMY GOODMAN: You said in The Great Hack, in the film, that you have evidence of illegality in the Trump and Brexit campaigns, that they were conducted illegally. I was wondering if you can go into that. I mean, it was controversial even, and Carole Cadwalladr, the great reporter at The Observer and The Guardian, was blasted and was personally targeted, as The Great Hack demonstrates very well, for saying that Cambridge Analytica was involved in Brexit. They kept saying they had nothing to do with it, until she showed a video of you, who worked for Cambridge Analytica, at one of the founding events of Leave.EU, the Brexit campaign.

BRITTANY KAISER: Yeah, Leave.EU, that panel that I was on, which has now become quite an infamous video, was their launch event to launch the campaign. And Cambridge Analytica was in deep negotiations, through introduction of Steve Bannon, with both of the Brexit campaigns. I was told, actually, originally we pitched Remain, and the Remain side said that they did not need to spend money on expensive political consultants, because they were going to win anyway. And that’s actually what I also truly believed, and so did they.

So, Steve made the introductions to make sure that we would still get a commercial contract out of this political campaign, and both to Vote Leave and Leave.EU. Cambridge Analytica took Leave.EU, and AIQ, which was Cambridge Analytica’s essentially digital partner, before Cambridge Analytica could run our own digital campaigns, they were running the Vote Leave side, both funded by the Mercers, both with the same access to this giant database on American voters.

AMY GOODMAN: The Mercers funded Brexit?

BRITTANY KAISER: There was Cambridge Analytica work, as well as AIQ work, in both of the leave campaigns. So, a lot of that money, in order to collect that data and in order to build the infrastructure of both of those companies, came from Mercer-funded campaigns, yes.

AMY GOODMAN: And again, explain what AIQ is.

BRITTANY KAISER: AIQ was a company that actually ran all of Cambridge Analytica’s digital campaigns, until January 2016, when Molly Schweickert, our head of digital, was hired in order to build ad tech internally within the company. AIQ was based in Canada and was a partner that had access to Cambridge Analytica data the entire time that they were running the Vote Leave campaign, which was the designated and main campaign in Brexit.

AMY GOODMAN: So, when did you see the connection between Brexit and the Trump campaign?

BRITTANY KAISER: Actually, a lot of it started to come when I saw some of Carole’s reporting, because there were a lot of conspiracy theories over what was going on, and I didn’t know what to believe. All I knew was that we definitely did work in the Brexit campaign, “we” as in when I was at Cambridge Analytica, because I was one of the people working on the campaign. And we obviously played a large role in not just the Trump campaign itself, but Trump super PACs and a lot of other conservative advocacy groups, 501(c)(3)s, (4)s, that were the infrastructure that allowed for the building of the movement that pushed Donald Trump into the White House.

AMY GOODMAN: I mean, it looks like Cambridge Analytica was heading to a billion-dollar corporation.

BRITTANY KAISER: That’s what Alexander used to tell us all the time. That was the carrot that he waved in front of our eyes in order to have us keep going. “We’re building a billion-dollar company. Aren’t you excited?” And I think that that’s what so many people get caught up in, people that are currently working at Facebook, people that are working at Google, people that are working at companies where they are motivated to build exciting technology, that obviously can also be very dangerous, but they think they’re going to financially benefit and be able to take care of themselves and their families because of it.

AMY GOODMAN: So what was illegal?

BRITTANY KAISER: The massive problems that came from the data collection, specifically, are where my original accusations come from, because data was collected under the auspices of being for academic research and was used for political and commercial purposes. There are also different data sets that are not supposed to be matched and used without explicit transparency and consent in the United Kingdom, because they actually have good national data protection laws and international data protection laws through the European Union to protect voters. Unfortunately, in the United States, we only have seen the state of California coming out and doing it.

Now, on the other side, we have laws against voter suppression that prevent our vote from being suppressed. We have laws against discrimination in advertising, racism, sexism, incitement of violence. All of those things are illegal, yet somehow a platform like Facebook has decided that if politicians want to use any of those tactics, that they will not be held to the same community standards as you or me, or the basic laws and social contracts that we have in this country.

AMY GOODMAN: Karim Amer and Jehane Noujaim, I was wondering if you can talk about — Brittany sparked this when she talked about voter suppression — Trinidad and Tobago, which you go into in your film, because ultimately the elections there were about voter suppression and trying to get whole populations not to vote.

KARIM AMER: Yeah, I think it was important for us to show in the film the expansiveness of Cambridge’s work. This went beyond the borders of the United States and even beyond the borders of the EU and the U.K. Because what we find is that Cambridge used the — in pursuing this global influence industry that they were very much a part of, they used different countries as Petri dishes to learn and get the know-how about different tactics. And from improving those tactics, they could then sell them for a higher cost — higher margin in Western democracies, where the election budgets are, you know — we have to remember, I think it’s important to predicate that the election business has become a multibillion-dollar global business, right? So, we have to remember that while we are upset with companies like Cambridge, we allowed for the commoditization of our democratic process, right? So, people are exploiting this now because it’s become a business. And we, as purveyors of this, can’t really be as upset as we want to be, when we’ve justified that. So I want to preface it with that.

Now, that being said, what’s happened as a result is a company like Cambridge can practice tactics in a place like Trinidad, that’s very unregulated in terms of what they can and can’t do, learn from that know-how and then, you know, use it — parlay it into activities in the United States. What they did in Trinidad, and why it was important for us to show it in the film, is they led something called the “Do So” campaign, where they admit to making it cool and popular among youth to get out and not vote. And they knew —

AMY GOODMAN: So, you had the Indian population and the black population.

KARIM AMER: And the black population. And there is a lot of historic tension between those two, and a lot of generational differences, as well, between those two. And the “Do So” campaign targeted — was done in a way to, you know, by looking at the data and looking at the predictive analysis of which group would vote or not vote, dissuade enough people from voting that they could flip the election.

AMY GOODMAN: Targeted at?

KARIM AMER: Targeted at the youth. And so, this is really — when you watch —

AMY GOODMAN: “Do So” actually meant “don’t vote.”

KARIM AMER: “Do So,” don’t vote.

JEHANE NOUJAIM: Don’t vote.

KARIM AMER: Yes, exactly. And when —

AMY GOODMAN: With their fists crossed.

KARIM AMER: With their fists.

AMY GOODMAN: And that it became cool not to vote.

KARIM AMER: Exactly. And you look at the level of calculation behind this, and it’s quite frightening. Now, as Emma was saying, a lot of these tactics were born out of our own fears in the United States and the U.K. post-9/11, when we allowed for this massive weaponization of influence campaigns to begin. You know, if you remember President Bush talking about, you know, the battle for the hearts and minds of the Iraqi people, all of these kinds of industries were born out of this.

And now I believe what we’re seeing is the hens have come home to roost, right? All of these tactics that we developed in the name of, quote-unquote, “fighting the war on terror,” in the name of doing these things, have now been commercialized and used to come back to the biggest election market in the world, the United States. And how do we blame people for doing that, when we’ve allowed for our democracy to be for sale?

And that’s what Brittany’s files, which she’s releasing today and has released over the last couple of days, really give us insight into. The Hindsight Files that Brittany has released show us how there is an auction happening for influence campaigns in every democracy around the world. There is no vote that is protected in the current way that we — in the current space that we’re living.

And the thing that’s allowing this to happen is these information platforms like Facebook. And that is what’s so upsetting, because we can actually do something about that. We are the only country in the world that can hold Facebook accountable, yet we still have not done so. And we still keep going to their leadership hoping they do the right thing, but they have not. And why is that? Because no industry has ever shown in American history that it can regulate itself. There is a reason why antitrust laws exist in this country. There’s a tradition of holding companies accountable, and we need to re-embrace that tradition, especially as we enter into 2020, where the stakes could not be higher.

AMY GOODMAN: Jehane, you want to talk about the issue of truth?

JEHANE NOUJAIM: Yes. I think that what’s under attack is the open society and truth within it. And that underlies every single problem that exists in the world, because if we don’t have an understanding of basic facts and can’t have nuanced debate, our democracies are destroyed.

AMY GOODMAN: So, let’s go to this issue of truth. In October, as Facebook said it will not fact-check political ads or hold politicians to its usual content standards, the social media giant’s CEO Mark Zuckerberg was grilled for more than five hours by lawmakers on Capitol Hill on Facebook’s policy of allowing politicians to lie in political advertisements, as well as its role in facilitating election interference and housing discrimination. Again, this is New York Congressmember Alexandria Ocasio-Cortez grilling Mark Zuckerberg at that hearing.

REP. ALEXANDRIA OCASIO-CORTEZ: Could I run ads targeting Republicans in primaries, saying that they voted for the Green New Deal?

MARK ZUCKERBERG: Sorry, I — can you repeat that?

REP. ALEXANDRIA OCASIO-CORTEZ: Would I be able to run advertisements on Facebook targeting Republicans in primaries, saying that they voted for the Green New Deal? I mean, if you’re not fact-checking political advertisements, I’m just trying to understand the bounds here, what’s fair game.

MARK ZUCKERBERG: Congresswoman, I don’t know the answer to that off the top of my head. I think probably?

REP. ALEXANDRIA OCASIO-CORTEZ: So you don’t know if I’ll be able to do that.

MARK ZUCKERBERG: I think probably.

REP. ALEXANDRIA OCASIO-CORTEZ: Do you see a potential problem here with a complete lack of fact-checking on political advertisements?

MARK ZUCKERBERG: Well, Congresswoman, I think lying is bad. And I think if you were to run an ad that had a lie, that would be bad. That’s different from it being — from — in our position, the right thing to do to prevent your constituents or people in an election from seeing that you had lied.

REP. ALEXANDRIA OCASIO-CORTEZ: So, we can — so, you won’t take down lies, or you will take down lies? I think this is just a pretty simple yes or no.

AMY GOODMAN: That’s Congressmember Alexandria Ocasio-Cortez questioning Mark Zuckerberg at a House hearing. Brittany Kaiser, you also testified in Congress. You testified in the British Parliament. And as you watch Mark Zuckerberg, you also wrote a piece that says, “How much of Facebook’s revenue comes from the monetization of users’ personal data?” Talk about what AOC was just asking Zuckerberg, that he wouldn’t answer.

BRITTANY KAISER: Absolutely. So, the idea that politicians, everything that they say is newsworthy is something that Mark Zuckerberg is trying to defend as a defense of free speech. But you know what? My right to free speech ends where your human rights begin. I cannot use my right to free speech in order to discriminate against you, use racism, sexism, suppress your vote, incite violence upon you. There are limits to that. And there are limits to that that are very well and obviously enshrined in our laws.

Unfortunately, Mark Zuckerberg doesn’t seem to understand that. He is going on TV and talking about how the most important thing that he’s investing in is stopping foreign intervention in our elections. Fantastic. I definitely applaud that. But guess what. Russia only spent a couple hundred thousand dollars on intervening in the 2016 U.S. elections, whereas the Trump campaign spent billions amongst the PACs and the campaign and different conservative groups. So, you know what? The biggest threat to democracy is not Russia; the biggest threat is domestic.

AMY GOODMAN: So, keep on that front and how it’s operating and how we’re seeing it even continue today.

BRITTANY KAISER: Yes. So, I think it’s been very obvious that some of the campaign material that has come out is disinformation. It is fake news. And there are well-principled networks on television that refuse to air these ads. Yet somehow Facebook is saying yes.

And that is not only a disaster, but it’s actually completely shocking that we can’t agree to a social standard of what we are going to accept. Facebook has signed the Contract for the Web. Facebook has said that they believe in protecting us in elections. But time and time again, we have seen that that’s not actually true in their actions.

They need to be investing in human capacity and in technology, AI, in order to identify disinformation, fake news, hatred, incitement of violence. These are things that can be prevented, but they’re not making the decision to protect us. They’re making the decision to line their pockets with as much of our value as possible.

AMY GOODMAN: Can you talk about the “Crooked Hillary” campaign and how it developed?

BRITTANY KAISER: Absolutely. So, this started as a super PAC that was built for Ted Cruz, Keep the Promise I, which was run by Kellyanne Conway and funded by the Mercers. That was then converted to becoming a super PAC for Donald Trump. They tried to register the name Defeat Crooked Hillary with the Federal Election Commission, and the FEC, luckily, did not allow them to do that. So it was called Make America Number 1.

This super PAC was headed by David Bossie, someone that you might remember from Citizens United, who basically brought dark money into our politics and allowed endless amounts of money to be funneled into these types of vehicles so that we don’t know where all of the money is coming from for these types of manipulative communications. And he was in charge of this campaign.

Now, on that two-day-long debrief that I talked about — and if you want to know more, you can read about it in my book — they told us —

AMY GOODMAN: Wait, and explain where you were and who was in the room.

BRITTANY KAISER: So, I was in New York in our boardroom for Cambridge Analytica’s office on Fifth Avenue. And all of our offices from around the world had called in to videocast. And everybody from the super PAC and the Trump campaign took us through all of their tactics and strategies and implementation and what they had done.

Now, when we got to this Defeat Crooked Hillary super PAC, they explained to us what they had done, which was to run experiments on psychographic groups to figure out what was working and what wasn’t. Unfortunately, what they found out was the only very successful tactic was sending fear-based, scaremongering messaging to people that were identified as being neurotic. And it was so successful in their first experiments that they spent the rest of the money from the super PAC over the rest of the campaign only on negative messaging and fearmongering.

AMY GOODMAN: And crooked, the O-O in “crooked” was handcuffs.

BRITTANY KAISER: Yes. That was designed by Cambridge Analytica’s team.

AMY GOODMAN: Karim?

KARIM AMER: And one thing that I think it’s important to remember here, because there’s been a lot of debate among some people about: Did this actually work? To what degree did it work? How do we know whether it worked or not? What Brittany is describing is a debrief meeting where Cambridge, as a company, is saying, “This is what we learned from our political experience. This is what actually worked.” OK? And they’re sharing it because they’re saying, “Now this is how we want to codify this and commoditize this to go into commercial business.” Right?

So this is the company admitting to their own know-how. There is no debate about whether it works or not. This is not them advertising it to the world. This is them saying, “This is what we’ve learned. Based off that, this is how we’re going to run our business. This is how we’re going to invest in the expansion of this to sell this outside of politics.” The game was, take the political experience, parlay it into the commercial sector. That was the strategy. So, there is no debate whether it worked or not. It was highly effective.

And the thing that’s terrifying is that while Cambridge has been disbanded, the same actors are out there. And there’s nothing has been — nothing has changed to allow us to start putting in place legislation to say there is something called information crimes. In this era of information warfare, in this era of information economies, what is an information crime? What does it look like? Who determines it? And yet, without that, we are still living in this unfiltered, unregulated space, where places like Facebook are continuing to choose profit over the protection of the republic. And I think that’s what’s so outrageous.

JEHANE NOUJAIM: And I think it’s pretty telling that only two people —

AMY GOODMAN: Jehane.

JEHANE NOUJAIM: Only two people have come forward from Cambridge Analytica. Why is that? Both of the people that have come forward, Brittany and Chris, and also with Carole’s writing, have been targeted personally. And it’s been a very, very difficult story to tell. Even with us, when we released the film in January, every single time we have entered into the country, we have been stopped for four to six hours of questioning at the border. That —

AMY GOODMAN: Stopped by?

JEHANE NOUJAIM: Stopped by — on the border of the U.S., in JFK Airport, where you’re taken into the back, asked for all of your social media handles, questioned for four to six hours, every single time we enter the country. So —

AMY GOODMAN: Since when?

JEHANE NOUJAIM: Since we released the film, so since Sundance, since January, every time we’ve come back into the U.S.

AMY GOODMAN: And on what grounds are they saying they’re stopping you?

JEHANE NOUJAIM: No explanation. No —

AMY GOODMAN: And what is your theory?

JEHANE NOUJAIM: My theory is that it’s got something to do with this film. Maybe we’re doing something right. We were at first — we’ve been stopped in Egypt, but we’ve never been stopped in the U.S. in this way. We’re American citizens. Right?

AMY GOODMAN: You talk about people coming forward and not coming forward. I wanted to turn to former Cambridge Analytica COO, the chief operating officer, Julian Wheatland, speaking on the podcast Recode Decode.

JULIAN WHEATLAND: The company made some significant mistakes when it came to its use of data. They were ethical mistakes. And I think that part of the reason that that happened was that we spent a lot of time concentrating on not making regulatory mistakes. And so, for the most part, we didn’t, as far as I can tell, make any regulatory mistakes, but we got almost distracted by ticking those boxes of fulfilling the regulatory requirements. And it felt like, well, once that was done, then we’d done what we needed to do. And we forgot to pause and think about ethically what was — what was going on.

AMY GOODMAN: So, if you could decode that, Brittany? Cambridge Analytica COO Julian Wheatland, who, interestingly, in The Great Hack, while he was — really condemned Chris Wylie, did not appreciate Chris Wylie stepping forward and putting Cambridge Analytica in the crosshairs in the British Parliament, he was more equivocal about you. He — talk about Wheatland and his role and what he’s saying about actually abiding by the regulations, which they actually clearly didn’t.

BRITTANY KAISER: Once upon a time, I used to have a lot of respect for Julian Wheatland. I even thought we were friends. I thought we were building a billion-dollar company together that was going to allow me to do great things in the world. But, unfortunately, that’s a story that I told myself and a story he wanted me to believe that isn’t true at all.

While he likes to say that they spent a lot of time abiding by regulations, I would beg to differ. Cambridge Analytica did not even have a data protection officer until 2018, right before they shut down. I begged for one for many years. I begged for more time with our lawyers and was told I was creating too many invoices. And for a long time, because I had multiple law degrees, I was asked to write contracts. And so were other —

AMY GOODMAN: Didn’t you write the Trump campaign contract?

BRITTANY KAISER: The original one, yes, I did. And there were many other people that were trained in human rights law in the company that were asked to draft contracts, even though contract law was not anybody’s specialty within the company. But they were trying to cut corners and save money, just like a lot of technology companies decide to do. They do not invest in making the ethical or legal decisions that will protect the people that are affected by these technologies.

AMY GOODMAN: I wanted to bring Emma Briant back into this conversation. When you hear Julian Wheatland saying, “We abided by all regulations,” talk about what they violated. Ultimately, Cambridge Analytica was forced to disband. Was Alexander Nix criminally indicted?

EMMA BRIANT: I think that the investigations into what Alexander Nix has done will continue and that the revelations that will come from the Hindsight Files will result, I think, in more criminal investigations into this network of people. I think that it’s deeply worrying, the impact that they’ve had on — well, in terms of security, but also in terms of, you know, the law breaking that has happened in respect to data privacy. What was done with Facebook, in particular, is deeply worrying. But you look at what has been revealed in these files, and you start to realize that, actually, there’s a hell of a lot more going on behind the scenes that we don’t know.

And what Julian Wheatland is covering for is his central role in that company, which was in charge of all of the finances. Now, they were covering up wrongdoing repeatedly throughout their entire history. And that goes back way before Chris Wylie or Brittany Kaiser had joined the companies. I’ve seen documents which are evidencing things like cash payments and the use of shell companies to cover things up. It’s deeply, deeply disturbing. And I think the fact that Julian Wheatland is trying to shift blame onto the academics or just onto Facebook — because, you know, obviously Facebook has a massive culpability and has received multiple fines for its own role. However, it’s not just about Facebook, and it’s not just about those academics who were doing things wrong. It’s actually about Wheatland himself and about these people in charge of the company who were making the massive decisions that have affected all of us.

AMY GOODMAN: What happened with Professor Kogan?

EMMA BRIANT: Well, I think this is a really important aspect of it. The academics —

AMY GOODMAN: He was the Cambridge University professor that Chris Wylie went to —

EMMA BRIANT: Yes, he was —

AMY GOODMAN: — to say, “Help us scrape the data of, what, 80 million Facebook users,” under the guise of academic research that Brittany was just describing.

EMMA BRIANT: Exactly. And there’s still a little bit of a lack of clarity over the role of Kogan with respect to his — the Ph.D. student at the time, Michal Kosinski, who was also working on this kind of — the analysis of the Facebook data and its application to the personality quiz, the OCEAN personality quiz, which Brittany Kaiser talked about.

But, basically, with Joseph Chancellor, Kogan set up a new company, which would commercialize the data that have been obtained for their research. But, of course, this was known to Facebook. We are starting to realize that they knew way earlier what was going on. And it was being sold to Cambridge Analytica for the purposes of the U.S. elections. And, of course, Cambridge Analytica were also using it for their military technologies, the development of their information warfare techniques, which, by the way, they were also hoping to get many more defense contracts out of the winning of the U.S. election, and then, of course, apply it into commercial practices. And, you know, the ways in which they —

AMY GOODMAN: I wanted to stop you there. I just wanted to go to this point —

EMMA BRIANT: Oh, yeah.

AMY GOODMAN: — because you mentioned it in Part 1 of our discussion —

EMMA BRIANT: Of course.

AMY GOODMAN: — this issue —

EMMA BRIANT: Sure.

AMY GOODMAN: — of military contractors —

EMMA BRIANT: Yeah.

AMY GOODMAN: — and the nexus of military and government power, the fact that with Trump’s election —

EMMA BRIANT: Yeah.

AMY GOODMAN: — military contractors were one of the greatest financial beneficiaries of Trump’s election.

KARIM AMER: But I think it’s important to remember that —

EMMA BRIANT: Absolutely.

AMY GOODMAN: Karim Amer.

KARIM AMER: — the issue is that also these — when we think of military contractors, we think of people selling tanks and guns and bullets and these types of things. The problem that we don’t realize is that we’re in an era of information warfare. So the new military contractors aren’t selling — aren’t selling the traditional tanks. They’re selling the —

AMY GOODMAN: Although they’re doing that.

KARIM AMER: They’re doing that, as well, but they’re selling the equivalent of that in the information space. And that’s a new kind of weapon. That’s a new kind of battle that we’re not familiar with.

And the reason why it’s more challenging for us is because there’s a deficit of language and a deficit of visuals. We don’t know where the battlefield is. We don’t know where the borders are. We can’t pinpoint, be like, “This is where the trenches are.” Yet we’re starting to uncover that. And that was so much of the challenge in making this film, is trying to see where can we actually show you where these wreckage sites are, where the casualties of this new information warfare are, and who the actors are and where the fronts are.

And I think, in entering 2020, we have to keep a keen eye on where the new war fronts are and when they’re happening in our domestic frontiers and how they’re happening in these devices that we use every day. So this is where we have to have a new kind of reframing of what we’re looking at, because while we are at war, it is a very different kind of borderless war where asymmetric information activity can affect us in ways that we never imagined.

AMY GOODMAN: And, Emma Briant, you talked about when Facebook knew the level of documentation that Cambridge Analytica was taking from them.

EMMA BRIANT: Yeah.

AMY GOODMAN: I mean, Cambridge Analytica paid them, right?

EMMA BRIANT: Yes. I mean, they were providing the data to GSR, who then, you know, were paid by —

AMY GOODMAN: Explain what GSR is.

EMMA BRIANT: Sorry, GSR is Global Science Research, the company Kogan and Joseph Chancellor were setting up to do both academic research and to exploit the data for Cambridge Analytica’s purposes. So they were working with — on mapping that data onto the personality tests and giving that access to Cambridge Analytica, so that they could scale it up to profile people across the target states in America especially, but also all across America. They obtained way more than they ever expected, as Chris Wylie and Brittany have shown.

But I want to also ask: When did our governments know about what Cambridge Analytica and SCL were doing around the world and when they were starting to work in our elections? One of the issues is that these technologies have been partly developed by, you know, grants from our governments and that these were, you know, defense contractors, as we say. We have a responsibility for those companies and for ensuring that there’s reporting back on what they’re doing, and some kind of transparency.

As Karim was saying, that if you — you know, we’re in a state of global information warfare now. If you have a bomb that has been discovered that came from an American source and it’s in Yemen, then we can look at that bomb, and often there’s a label which declares that it’s an American bomb that has been bought, that has, you know, been used against civilians. But what about data? How do we know if our militaries develop technologies and the data that it has gathered on people, for instance, across the Middle East, the kind of data that Snowden revealed — how do we know when that is turning up in Yemen or when that is being utilized by an authoritarian regime against the human rights of its people or against us? How do we know that it’s not being manipulated by Russia, by Iran, by anybody who’s an enemy, by Saudi Arabia, for example, who SCL were also working with? We have no way of knowing, unless we open up this industry and hold these people properly accountable for what they’re doing.

AMY GOODMAN: Let me ask you about Cambridge University, Emma Briant.

EMMA BRIANT: Sure.

AMY GOODMAN: We’re talking about Cambridge professors, but how did Cambridge University — or, I should say, did it profit here?

EMMA BRIANT: I think the issue is that a lot of academics believe that — you know, I mean, their careers are founded on the ability to do their research. They are incentivized to try and make real-world impacts. And in the United Kingdom, we have something called the Research Excellence Framework. That’s building in more and more the requirement to show that you have had some kind of impact in the real world. All academics are being — feeling very much like they want to be engaged in industry. They want to be engaged in — not just in lofty towers and considered an elite, but working in partnerships with people having an impact in real campaigns and so on. And I think there’s a lot of incentivization also to make a little money on the side with that.

And the issue is that it’s not just actually Kogan who was involved working with Cambridge Analytica. There were many academics. And, yes, universities make — you know, are able to profit off these kinds of relationships, because that is the global impact of their internationally renowned academics. Unfortunately, I think that there isn’t built into that enough accountability and consideration of ethics.

And there is too great an ability to say, “Oh, but that’s not really part of my academic work,” when it’s something that’s a negative outcome. So, for instance, I recently revealed that a professor at Oxford University had been working with SCL, too, and there are other academics who were working with SCL all around the world who benefit in their careers from doing this. But then, when it’s revealed that there’s some wrongdoing, you know, it’s taken off the CV.

Now, I think universities have a great responsibility to be not profiting from this, to be transparent about it. They’re presenting themselves as ethical institutions that are, you know, teaching students. And what if those staff are actually, you know, engaged in nefarious activities? The problem with Cambridge University is they’ve also covered this up. And, you know, Oxford University, when I tried to challenge them, as well, have also tried to cover this up. And we don’t know how many other universities are also doing this.

And the universities also want to expand the number of students they’ve got, and they will work with firms like Cambridge Analytica. Sheffield University were named in the documents that Brittany Kaiser has released. I’ve been chasing them with Freedom of Information requests to find out whether or not they actually were a client of Cambridge Analytica. And it turns out that they had deleted the presentation and the emails they had exchanged with the company.

Now, the lack of openness about decision-making about student recruitment is disturbing, because it looks like Sheffield didn’t partner with Cambridge Analytica, but other universities did. And that’s enabling that company to then have access to students’ data, which, potentially, if they are in mind to abuse it, could be used for political targeting. So, if you imagine American universities are coming forward and working with a lot of, you know, data-driven companies to try to improve their outreach to gather more students to look for — like, what they were doing in the past is looking for look-alike audiences and so on — in order to, you know, increase their student numbers —

AMY GOODMAN: Emma Briant —

EMMA BRIANT: And all of this data is being given across to these companies that have no transparency at all.

AMY GOODMAN: Emma Briant, before I go back to Brittany on this issue of the new Hindsight Files, I wanted to ask you what you found most interesting about them, though she is just releasing them now, since the beginning of the year, so there is an enormous amount to go through.

EMMA BRIANT: Yes.

AMY GOODMAN: It involves scores of countries all over the world.

EMMA BRIANT: To me, I think the biggest reveal is going to be in the American campaigns around this, but I think you haven’t seen the half of it yet. This is the tip of the iceberg, as I’ve been saying.

My thing that I think that is most interesting of what’s been revealed so far is actually the Iran campaign, because, you know, this is a very complex issue, and it really is an exemplar of the kinds of conflicts of interest that I’m talking about, at a company that is, you know, set out to profit from the arms trade and from the expansion of war in that region and from the favoring of one side in a regional conflict, essentially, backed by American power, by the escalation of the conflict with Iran and, you know, by getting more contracts, of course, with the Gulf states, the UAE and the Saudis. You know, and, of course, they were trying to put Trump in power, as well, to do that, and advancing John Bolton and the other hawks who have been trying to demand that sanctions — to keep sanctions and to get out of the Iran deal, which they have been arguing is a flawed deal.

And, of course, SCL were involved in doing work in that region since 2013, including they were working before that on Iran for President Obama’s administration, which I’m going to be talking more about in the future. The issue is that there is a conflict of interest here. So you gain experience for one government, and then you’re going and working for others that maybe are not entirely aligned in their interests. Thank you.

AMY GOODMAN: Well, I mean, let’s be clear that all of this happened — although it was for the election of Donald Trump, of course, it happened during the Obama years.

EMMA BRIANT: Yes.

AMY GOODMAN: That’s when Cambridge Analytica really gained its strength in working with Facebook.

EMMA BRIANT: Yeah. And SCL’s major shareholder, Vincent Tchenguiz, of course, was involved in the early establishment of the company Black Cube and in some of its early funding, I believe. I don’t know how long they stayed in any kind of relationship with that firm. However, the firm Black Cube were also targeting Obama administration officials with a massive smear campaign, as has been revealed in the media. And, you know, this opposition to the Iran deal and the promotion of these kinds of, you know, really fearmongering advertising that Brittany is talking about is very disturbing, when this same company is also driving, you know, advertising for gun sales and things like that.

AMY GOODMAN: Wait. Explain what Black Cube is, which goes right to today’s headlines —

EMMA BRIANT: Exactly.

AMY GOODMAN: — because Harvey Weinstein, accused of raping I don’t know how many women at last count, also employed Black Cube, former Israeli intelligence folks, to go after —

EMMA BRIANT: Yes.

AMY GOODMAN: — the women who were accusing him, and even to try to deceive the reporters, like at The New York Times, to try to get them to write false stories.

EMMA BRIANT: Absolutely. I mean, this is an intelligence firm that was born, again, out of the “war on terror.” So, Israel’s war on terror, this time, produced an awful lot of people who had gone through conscription and developed really, you know, strong expertise in cyberoperations or on developing information warfare technologies, in general, intelligence gathering techniques. And Black Cube was formed by people who came out of the Israeli intelligence industries. And they all formed these companies, and this has become a huge industry, which is not really being properly regulated, as well, and properly governed, and seems to be rather out of control. And they have been also linked to Cambridge Analytica in the evidence to Parliament. So, I think the involvement of all of these companies is really disturbing, as well, in relation to the Iran deal.

We don’t know that Cambridge Analytica in any way were working with Black Cube in this, at this point in time. However, the fact is that all of this infrastructure has been created, which is not being properly tackled. And how they’re able to operate without anybody really understanding what’s going on is a major, major problem.

We have to enact policies that open up what Facebook and other platforms are doing with our data, and make data work for our public good, make it impossible for these companies to be able to operate in such an opaque manner, through shell companies and so forth and dark money being funneled through, you know, systems, political communication systems, and understand that it’s not just about us and our data and as individuals. We have a public responsibility to others. If my data is being used in order to create a model for voter suppression of someone else, I have a responsibility to that. And it’s not just about me and whether or not I consent to that. If I’m consenting to somebody else’s voter suppression, my data to be used for that purpose, that’s also unacceptable. So what we really need is an infrastructure that protects us and ensures that that can’t be possible.

AMY GOODMAN: Brittany, I wanted to go back to this data dump that you’re doing in this release of documents that you’ve been engaged in since the beginning of the year. Brittany Kaiser, you worked for Cambridge Analytica for more than three years, so these documents are extremely meaningful. Emma Briant just mentioned Black Cube. Who else did Black Cube work with?

BRITTANY KAISER: I don’t know about Black Cube, but I do know that there were Israeli intelligence firms that were cooperating with Cambridge Analytica and with some of Cambridge Analytica’s clients around the world, and not just former Israeli intelligence officers, but intelligence officers from many other countries. That’s concerning, but also Black Cube isn’t the only company you should be concerned about.

The founder of Blackwater, or their CEO, Erik Prince, was also an investor in Cambridge Analytica. So he profits from arms sales around the world, and military contracts, and has been accused of causing the unnecessary death of civilians in very many different wartime situations. He was one of the investors in Cambridge Analytica and their new company, Emerdata. And so, I should be very concerned, and everyone should be very concerned, about the weaponization of our data by people that are actually experts in selling weapons. So, that’s one thing that I think needs to be in the public discussion, the difference between what is military, what is civilian, and how those things can be used for different purposes or not.

AMY GOODMAN: Were you there with Erik Prince on inauguration night? He was there on election night with Donald Trump.

BRITTANY KAISER: I suppose he was there. That has been reported. But I’ve never met the man. I have never spoken to him, nor do I ever wish to, to be honest.

So, now that — I really want to address back your question about the files that I’m releasing. I started this on New Year’s because I have no higher purpose than to get the truth out there. People need to know what happened. And for the pieces of the puzzle that I do not have, I do hope that investigative journalists and citizen journalists and individuals around the world, as well as investigators that are still working with these documents, can actually help bring people to justice.

I’m not the only person who’s releasing files. I’d like to draw attention to the Hofeller Files, which have also been released recently by the daughter of Thomas Hofeller, who was a GOP strategist. And she just released a massive tranche of documents the other day that show the voter suppression tactics used by Republicans against minorities around the United States. So, that is extra evidence, on top of what I’ve already released, on voter suppression.

I know that this concerns quite a lot of people because of the number of cyberattacks that have been happening on our files that are currently hosted on Twitter. People are trying desperately to take those down. They’re not going to, because I have some of the best cybersecurity experts in the world keeping them up there. But I want to say to anyone that’s been trying to take them down: You cannot take them down, and you won’t be able to stop the release of the rest of the documents, because the information is decentralized around the world in hundreds of locations, and these files are coming out whether you like it or not.

AMY GOODMAN: In talking about Thomas Hofeller, these documents reveal that this now-dead senior Republican strategist, who specialized in gerrymandering, was secretly behind the Trump administration’s efforts to add a citizenship question to the 2020 census. He had been called the “Michelangelo of gerrymandering.” When he died in 2018, he left behind this computer hard drive full of his notes and records, and his estranged daughter found among the documents a 2015 study that said adding the citizenship question to the census, quote, “would be advantageous to Republicans and non-Hispanic whites” and “would clearly be a disadvantage to the Democrats.” That’s what she is releasing in the Hofeller Files.

BRITTANY KAISER: Absolutely. And some of the evidence that I have in the Hindsight Files corroborates those types of tactics. Voter suppression, unfortunately, is a lot cheaper than getting people to register and turn out to the polls. And that is so incredibly sad. Roger McNamee actually talks about this quite often now, about the gamification of Facebook and how much hatred and negativity is cheaper and easier and more viral, and what that actually means for informing the strategy of campaigns. And we need to be very aware of that and know that what we’re seeing is made to manipulate and influence us, not for our own interests.

AMY GOODMAN: Talk more about these files and what you understand is in them. For example, we’ve been talking about Facebook, and something, of course, brought out in The Great Hack, in the Oscar-shortlisted film, Facebook owns WhatsApp. WhatsApp was key in elections like in Brazil for the far-right President Jair Bolsonaro.

BRITTANY KAISER: Absolutely. And so, we have to realize that now that Facebook owns Instagram and WhatsApp, that the amount of data that they own about individuals around the world, behavioral data and real-time data, is absolutely unmatched. They are the world’s largest communications platform. They are the world’s largest advertising platform. And if they do not have the best interests of their users at heart, our democracies will never be able to succeed.

So, regulation of Facebook, perhaps even the breaking up of it, is the only thing that we can do at this moment, when Mark Zuckerberg and Sheryl Sandberg have decided to not take the ethical decision themselves. At this time, we cannot allow companies to be responsible for their own ethics and moral compass, because they’ve proven they cannot do it. We need to force them.

And anybody that has listened to this, please call your legislator. Tell them you care about your privacy. Tell them you care about your data rights. Tell them you care about your electoral laws being enforced online. You know what? Your representatives work for you. If you have any employees, do you not tell them what you want them to do every single day? Call your legislator. It takes a few minutes. Or even write them an email if you don’t want to pick up the phone. I beg of you. This is so incredibly important that we get through national legislation on these issues — national regulation. Just having California and New York lead the way is not enough.

JEHANE NOUJAIM: These are files that show over 68 countries, election manipulation in over 68 countries around the world. And it’s eerie when you — some of these are audio files — sitting, listening to audio, where inside the Trump campaign they are talking about how fear and hate engage people longer and are the best way to engage voters. And so, when you listen to that and listen to the research that has gone into that, all of a sudden it becomes clear why there is such division, why you see such hate, why you see such anger on our platforms.

KARIM AMER: And I think what’s important to see —

AMY GOODMAN: Karim.

KARIM AMER: — is that, you know, in the clip you showed, Chris Wylie is talking about how Cambridge is a full-service propaganda machine. What does that really mean? You know, I would say that what’s happening is we’re getting insight into the network of the influence industry, the buying and selling of information and of people’s behavioral change. And it is a completely unregulated space.

And what’s very worrisome is that, as we’re seeing more and more, with what Emma’s talking about and what Brittany has shown, is this conveyor belt of military-grade information and research and expertise coming out of our defense work, that’s being paid for by our tax money, then going into the private sector and selling it to the highest bidder, with different special interests from around the world. So what you see in the files is, you know, an oil company buying an influence campaign in a country that it’s not from and having no — you know, no responsibility or anything to what it’s doing there. And what happens in that, the results of that research, where it gets handed over to, no one knows. Any contracts that then result in the change that happens on the political ground, no one tracks and sees.

So, this is what we’re very concerned about, is because you’re seeing that everything has become for sale. And if everything is for sale —

JEHANE NOUJAIM: Our elections are for sale.

KARIM AMER: Exactly. And so, how do we have any kind of integrity to the vote, when we’re living in such a condition?

JEHANE NOUJAIM: Democracy has been broken. And our first vote is happening in 28 days, and nothing has changed. No election laws have changed. Facebook’s a crime scene. No research, nothing has come out. We don’t understand it yet. This was why we felt so passionate about making this film, because it’s invisible. How do you make the invisible visible? And this is why Brittany is releasing these files, because unless we understand the tactics, which are currently being used again, right now, as we speak, same people involved, then we can’t change this.

AMY GOODMAN: Facebook’s a crime scene, Jehane. Elaborate on that.

JEHANE NOUJAIM: Absolutely. Facebook is where this has happened. Initially, we thought this was Cambridge Analytica — right? — and that Cambridge Analytica was the only bad player. But Facebook has allowed this to happen. And they have not revealed. They have the data. They understand what has happened, but they have not revealed that.

AMY GOODMAN: And they have profited off of it.

JEHANE NOUJAIM: And they have profited off of it.

KARIM AMER: Well, it’s not just that they’ve profited off it. I think what’s even more worrisome is that a lot of our technology companies, I would say, are incentivized now by the polarization of the American people. The more polarized, the more you spend time on the platform checking the endless feed, the more you’re hooked, the more you’re glued, the more their KPI at the end of the year, which says number of hours spent per user on platform, goes up. And as long as that’s the model, then everything is designed, from the way you interact with these devices to the way your news is sorted and fed to you, to keep you on, as hooked as possible, in this completely unregulated, unfiltered way — under the guise of freedom of speech when it’s selectively there for them to protect their interests further. And I think that’s very worrisome.

And we have to ask these technology companies: Would there be a Silicon Valley if the ideals of the open society were not in place? Would Silicon Valley be this refuge for the world’s engineers of the future to come reimagine what the future could look like, had there not been the foundations of an open society? There would not be. Yet the same people who are profiting off of these ideals protecting them feel no responsibility in their preservation. And that is what is so upsetting. That is what is so criminal. And that is why we cannot look to them for leadership on how to get out of this.

We have to look at the regulation. You know, if Facebook was fined $50 billion instead of five, I guarantee you we wouldn’t be having this conversation right now. It would have led to not only an incredible change within the company, but it would have been the signal to the entire industry. And there would have been innovation that would have been sponsored to come out of this problem. Like, we can use technology to fix this, as well. We just have to create the right incentivization plan. I have belief that the engineers of the future that are around can help us get out of this. But currently they are not the — they are not the decision makers, because these companies are not democratic whatsoever.

AMY GOODMAN: Emma Briant?

EMMA BRIANT: Could I? Yes, please. I just wanted to make a point about how important this is for ordinary Americans to understand the significance for their own lives, as well, because I think some people hear this, and they think, “Oh, tech, this is maybe quite abstract,” or, you know, they may feel that other issues are more important when it comes to election time. But I want to make the point that, actually, you know what? This subject is about all of those other issues.

This is about inequality and it being enabled. If you care about, you know, having a proper debate about all of the issues that are relevant to America right now, so, you know, do you care about the — you know, the horrifying state of the American prison system, what’s being done to migrants right now, if you care about a minimum wage, if you care about the healthcare system, you care about the poverty, the homelessness on the streets, you care about American prosperity, you care about the environment and making sure that your country doesn’t turn into the environmental disaster that Australia is experiencing right now, then you have to care about this topic, because we can’t have an adequate debate, we cannot, like, know that we have a fair election system, until we understand that we are actually having a discussion, from American to American, from, you know, country to country, that isn’t being dominated by rich oil industries or defense industries and brutal leaders and so on.

So, I think that the issue is that Americans need to understand that this is an underlying issue that is stopping them being able to have the kinds of policies that would create for them a better society. It’s stopping their own ability to make change happen in the ways that they want it to happen.

AMY GOODMAN: Emma Briant —

EMMA BRIANT: It’s not an abstract issue. Thank you.

AMY GOODMAN: What would be the most effective form of regulation? I mean, we saw old Standard Oil broken up, these monopolies broken up.

EMMA BRIANT: Yeah.

AMY GOODMAN: Do you think that’s the starting point for companies like Facebook, like Google and others?

EMMA BRIANT: I think that’s a big part of it. I do think that Elizabeth Warren’s recommendations when it comes to that and antitrust and so on are really important. And we have legal precedents to follow on that kind of thing.

But I also think that we need an independent regulator for the tech industry and also a separate one for the influence industry. So, America has some regulation when it comes to lobbying. In the U.K., we have none. And quite often, you know, American companies will partner with a British company in order to be able to get around doing things, for instance. We have to make sure that different countries’ jurisdictions cannot be, you know, abused in order to make something happen that would be forbidden in another country.

We need to make sure that we’re also tackling how money is being channeled into these campaigns, because, actually, there’s an awful lot we could do that isn’t just about censoring or taking down content, but that actually is, you know, about making sure that the money isn’t being funneled in to the — to fund these actual campaigns. If we knew who was behind them, if we were able to show which companies were working on them and what other interests they might have, then I think this would really open up the system to better journalism, to better — you know, more accountability.

And the issue isn’t just about what’s happening on the platforms, although that is a big part of it. We have to think about, you know, the whole infrastructure. We also need more accountability when it comes to our governments. So, this is the third part of what I would consider the required regulatory framework. You have to address the tech companies and platforms. You also have to address the influence industry companies.

And you also need to talk about defense contracting, which has insufficient accountability at the moment. There is not enough reporting. There is not enough — when I say “reporting,” I mean the companies are not required to give enough information about what their conflicts of interest might be, about where they’re getting their money and what else they’re doing. And we shouldn’t be able to have companies that are working in elections at the same time as doing this kind of national security work. It’s so risky. I personally would outlaw it. But at the very least, it needs to be controlled more effectively, you know, because I think a lot of the time that the people who are looking at those contracts, when they’re deciding whether to give, you know, Erik Prince or somebody else a defense contract, are not always knowledgeable about the individuals concerned or their wider networks. And that is really disturbing. If we’re going to be spending the amount of money that we do on defense contracting, we can at least make sure it’s accountable.

KARIM AMER: When you look at the film, there’s a clip that I think ties exactly to what Emma’s talking about, where in the secret camera footage of — Mark Turnbull is being filmed, and he’s bragging to a client and saying, you know, about the “Crooked Hillary” campaign, “We had the ability to release this information without any trackability, without anyone knowing where it came from, and we put it into the bloodstream of the internet.” Right? That is, I hope, something that we would say only happened in 2016 and will not be allowed to happen in 2020.

AMY GOODMAN: What would stop it? And let’s go —

KARIM AMER: Well, political action, because what — the problem with that is that what you’re saying there is, essentially, is you can go out there and say what you want, not as an individual — free speech is an individual who says no — as an organized, concerted, profiting entity with special interests, without anyone knowing what you’re saying. And I would say that the first step to accountability is to say, “If you want to say something in the political arena, you have to – we have to know who’s behind what’s being said.” And I guarantee you, if you just put at least that level of accountability, a lot of people will be — a lot less hate would be spewed that would be funded for, because it would connect back to who’s behind it.

AMY GOODMAN: Let’s go to that video clip. This was a turning point for Cambridge Analytica.

KARIM AMER: Yes.

AMY GOODMAN: It was not only Turnbull, it was Alexander Nix —

KARIM AMER: Yes.

AMY GOODMAN: — who was selling the company and talking about what they could do.

ALEXANDER NIX: Deep digging is interesting, but, you know, equally effective can be just to go and speak to the incumbent and to offer them a deal that’s too good to be true and make sure that that’s video recorded. You know, these sorts of tactics are very effective, instantly having video evidence of corruption —

REPORTER: Right.

ALEXANDER NIX: — putting it on the internet, these sorts of things.

REPORTER: And the operative you will use for this is who?

ALEXANDER NIX: Well, someone known to us.

REPORTER: OK, so it is somebody. You won’t use a Sri Lankan person, no, because then this issue will —

ALEXANDER NIX: No, no. We’ll have a wealthy developer come in, somebody posing as a wealthy developer.

MARK TURNBULL: I’m a master of disguise.

ALEXANDER NIX: Yes. They will offer a large amount of money to the candidate, to finance his campaign in exchange for land, for instance. We’ll have the whole thing recorded on cameras. We’ll blank out the face of our guy and then post it on the internet.

REPORTER: So, on Facebook or YouTube or something like this.

ALEXANDER NIX: We’ll send some girls around to the candidate’s house. We have lots of history of things.

AMY GOODMAN: So, that was Alexander Nix, the former head of the former company Cambridge Analytica. Brittany Kaiser, if you can talk about what happened when that video was released? I mean, he was immediately suspended.

BRITTANY KAISER: He was immediately suspended. And Julian Wheatland, the former COO/CFO, because he actually straddled both jobs, was then appointed temporary CEO before the company closed. What —

AMY GOODMAN: Is it totally dead now?

BRITTANY KAISER: I wouldn’t say so. Just because it’s not the SCL Group or Cambridge Analytica or Emerdata, which was the holding company they set up at the end so that they could bring in large-scale investors from all around the world to scale up — just because it’s not under those names doesn’t mean that the same work isn’t being done by the same people. Former Cambridge Analytica employees are currently supporting Trump 2020. They are working in countries all around the world with individual political consultancies, marketing consultancies, strategic communications firms.

And it’s not just the people that worked for Cambridge Analytica now. As I mentioned earlier, because 2016 was so successful, there are now hundreds of these companies all around the world. There was an Oxford University report that came out a couple months ago that showed the proliferation of propaganda service companies, that are even worse than what Cambridge Analytica did, because they employ bot farms. And they use trolls on the internet in order to increase hatred and division.