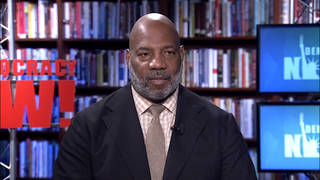

Guests

- Stephen Goose, director of Human Rights Watch’s Arms Division and co-founder of the Campaign to Stop Killer Robots.

Human rights activists and dozens of countries are calling for an all-out ban on the use of lethal autonomous weapons, also known as “killer robots,” that can give the final order to kill without a human overseeing the process. The weapons will come under review next week during high-level talks on the Convention on Certain Conventional Weapons. So far, the Biden administration has rejected calls to ban the weapons, instead proposing the establishment of a “code of conduct” for their use. “This is not just a new weapon, it’s a new form of warfare,” says Steve Goose, director of Human Rights Watch’s Arms Division and co-founder of the Campaign to Stop Killer Robots. “The majority of countries want to see a legally binding instrument — a new treaty — that would have prohibitions and regulations on fully autonomous weapons.”

Transcript

AMY GOODMAN: This is Democracy Now!, democracynow.org. We end today’s show looking at how human rights activists in some countries are calling for an all-out ban on the use of lethal autonomous weapons, known as “killer robots,” that can make the final order to kill without a human overseeing the process. They are coming under review during high-level talks on the Convention on Certain Conventional Weapons next week.

The Washington Post reports at least 30 countries have called for a ban on killer robots. Last Tuesday, New Zealand said it would join the international coalition demanding a ban, declaring, quote, “the prospect of a future where the decision to take a human life is delegated to machines is abhorrent.”

But so far, the Biden administration has rejected calls to ban the use of killer robots. During a U.N. meeting in Geneva Thursday, the U.S. instead proposed establishing a “code of conduct” for their use.

For more, we’re joined by Steve Goose, director of Human Rights Watch’s Arms Division and co-founder of the Campaign to Stop Killer Robots.

Steve, thanks so much for joining us again. First explain what killer robots are, and then talk about the U.S. role in fighting the ban.

STEPHEN GOOSE: Killer robots are a thing of the future, but they’re not in the distant future. These are weapons that take the human out of the loop. That is, it is not the human who decides what to target and when to pull the trigger, but instead the weapon system itself does this through artificial intelligence and sensors and algorithms. This is not just a new weapon, it’s a new form of warfare and not one that’s going to be nice to humankind.

AMY GOODMAN: So, explain who’s developing them, who’s using them and what the U.S. position is versus places like New Zealand and other countries.

STEPHEN GOOSE: There are a handful of countries that are very vigorously pursuing research and development programs aimed at the acquisition of killer robots or fully autonomous weapons. The U.S. is perhaps at the top of the list. Others would be Russia, Israel, South Korea, India. Those are the problem countries, but there are others. Most any advanced military is developing weapons that have ever greater amounts of autonomy in the systems.

The question is: How far do you take that? Do you take it all the way ’til you remove the human from the loop altogether, or do you draw a line where there’s still meaningful human control? For the U.S., their position is essentially one of wanting to safeguard their ability to produce these weapons, so they’re rejecting any notion of a treaty that would have prohibitions or restrictions on the development and acquisition of fully autonomous weapons, and instead are proposing measures that would allow the acquisition of the weapons but have some regulations about how they’re used.

AMY GOODMAN: So, earlier this year, The New York Times published a piece on the use of an autonomous weapon to kill an Iranian nuclear scientist. Can you talk about that?

STEPHEN GOOSE: There’s a lot of misunderstanding about what a killer robot might consist of and whether or not they exist today. Systems with a great deal of autonomy already exist. Indeed, drones have a great deal of autonomy. You were just talking about drones a moment ago. But systems that remove the human from the loop altogether are what we’re trying to oppose, and those do not yet exist.

AMY GOODMAN: And can you talk about the Geneva meeting that’s taking place on certain conventional weapons? Explain its significance and Human Rights Watch’s recent report, “Crunch Time on Killer Robots.” The report argues that existing international law is not adequate to address the urgent threats posed by such weapons that some countries are developing.

STEPHEN GOOSE: There are diplomatic talks that are going on this week, last week and this week, that are aimed at developing options for future work on what they like to call lethal autonomous weapon systems, or LAWS, a very unfortunate acronym for this issue. And these are preparing for a five-year review conference of this CCW, the Convention on [Certain] Conventional Weapons, which will take place next week. And at this five-year review conference, they’re supposed to look at what they’ve done over the past five years and plan for the next five years.

And it’s a decision that will have to be made about what to do on killer robots, and they’re looking at the various options that are out there. The majority of countries want to see a legally binding instrument — a new treaty — that would have prohibitions and regulations on fully autonomous weapons. Others are proposing a nonbinding political declaration. Others are proposing a code of conduct. The problem with a code of conduct is that it presupposes that indeed you will pursue these weapons, they will come into being, and it will be just a matter of how well you can regulate them. We think that is an approach that will result in these weapons proliferating around the world and having the destabilizing effect that is all too easy to imagine.

AMY GOODMAN: Well, I want to thank you so much for being with us, Steve Goose, director of Human Rights Watch’s Arms Division and co-founder of the Campaign to Stop Killer [Robots].

That does it for our show. We hope everyone will join us on Tuesday night, December 7th, at 8 p.m. Eastern Time, when we celebrate at democracynow.org our 25th anniversary. We’ll be joined by the birthday boy Noam Chomsky himself. That’s right, December 7th will be his 93rd birthday. We’ll also be joined by Angela Davis, Arundhati Roy, the National Book Award-winning poet Martín Espada, Winona LaDuke, Danny DeVito, Danny Glover and more. You can go to democracynow.org for details. That’s December 7th at 8 p.m. Eastern. I’m Amy Goodman. Stay safe. Wearing a mask is an act of love.
