We look at two cases before the Supreme Court that could reshape the future of the internet. Both cases focus on Section 230 of the Communications Decency Act of 1996, which backers say has helped foster free speech online by allowing companies to host content without direct legal liability for what users post. Critics say it has allowed tech platforms to avoid accountability for spreading harmful material. On Tuesday, the justices heard arguments in Gonzalez v. Google, brought by the family of Nohemi Gonzalez, who was killed in the 2015 Paris terror attack. Her family sued Google, claiming the company had illegally promoted videos by the Islamic State, which carried out the Paris attack. On Wednesday, the justices heard arguments in the case of Twitter v. Taamneh, brought by the family of Nawras Alassaf, who was killed along with 38 others in a 2017 terrorist attack on a nightclub in Turkey. We speak with Aaron Mackey, senior staff attorney with the Electronic Frontier Foundation, who says Section 230 “powers the underlying architecture” of the internet.

Transcript

AMY GOODMAN: We begin today’s show looking at two Supreme Court cases that could reshape the future of the internet. Both cases center on Section 230 of the Communications Decency Act of 1996, which has protected internet platforms from being sued over content posted on their sites by outside parties. Backers of Section 230 say the law has helped foster free speech online. The Electronic Frontier Foundation has described Section 230 as, quote, “one of the most valuable tools for protecting freedom of expression and innovation on the internet.”

Well, on Tuesday, justices heard arguments in Gonzalez v. Google. The case was brought by the family of Nohemi Gonzalez, who was killed in the Paris 2015 terror attack in France. Her family sued Google, claiming the company had illegally promoted Islamic State propaganda videos. Then, on Wednesday, justices heard arguments in the case of Twitter v. Taamneh. This case was brought by the family of Nawras Alassaf, who was killed along with 38 others in a 2017 ISIS attack on a nightclub in Turkey. During oral arguments in the Twitter case, Justice Elena Kagan made this comment, which was met with laughter.

JUSTICE ELENA KAGAN: I mean, we’re a court. We really don’t know about these things. You know, these are not like the nine greatest experts on the internet.

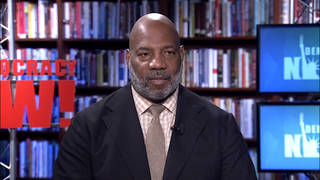

AMY GOODMAN: For more, we’re joined by Aaron Mackey. He is senior staff attorney with the Electronic Frontier Foundation, which submitted amicus briefs for both cases.

Aaron, welcome to Democracy Now! It’s great to have you with us. Can you talk about the significance of the Supreme Court and the internet last week, the idea that based on their decision, it could change the internet as we know it for all time?

AARON MACKEY: Yes. Good morning.

The two cases that were heard were the first time that the Supreme Court has actually ever come across and potentially interpreted Section 230. And what Section 230 is — why Section 230 is so important is because its legal protections for online intermediaries power sort of the underlying architecture that we all use every day. So, when internet users use email, when they set up their own websites, when they use social media or create their own blogs or comment on each other’s blogs, all of that is powered and protected by Section 230.

And so, EFF’s concern in these two cases is that the Supreme Court might interpret Section 230 narrowly so that internet users will not have those similar opportunities in the future to organize online, to speak online, to find their communities online, because the law might be narrowed, and internet services might react in a way that limits opportunities for people to both speak online, but also limits the types of forums and the type of speech that we can have online.

AMY GOODMAN: Aaron, explain what happened with Nohemi Gonzalez in 2015 and what this case is based on.

AARON MACKEY: Yeah. So, the central allegations in the complaint are not that YouTube played any role in the attacks that resulted in Nohemi Gonzalez’s death, but it’s that YouTube provided a number of features and services to either members of ISIS or ISIS supporters that allowed them to recruit, engage or sort of help or assist ISIS in sort of its larger organizational and terrorist goals. And so, based on that, they filed a claim, a civil claim, under the Anti-Terrorism Act for aiding and abetting ISIS.

And so, the courts have been basically interpreting Section 230 uniformly to say, fundamentally, those claims are based on the content of users’ speech, so posts on YouTube and, in Taamneh, posts on Twitter, and so, therefore, the courts have held that Section 230 applies and sort of bars those claims. And so, that is the sort of underlying claim. And so, really, I think what the Supreme Court — what you heard last week was them struggling with: Where do you draw the line to sort of impose liability on YouTube or Twitter or any sort of online service, when these claims are sort of very attenuated from the harm that has occurred in these cases?

And our concern is that if you put the sort of liability on those platforms for such sort of attenuated roles in the claims here, you’re really going to deter them from hosting any speech that even remotely deals with this. And this will likely fall on a number of organizations and individuals. It will fall on reporters. It will fall on people who are trying to seek access and document atrocities across the globe. And so, that’s what we’re concerned about.

AMY GOODMAN: So, when the Supreme Court justices were speaking, they used hypotheticals like pager companies, public payphones to try to deliberate on these cases. Can you talk about their ability to understand and regulate the internet?

AARON MACKEY: Yeah. I mean, I think what they were struggling with there was trying to really sort of find an analogy that works in terms of the relationship that an online service has with, say, millions of — hundreds of millions of users — right? — in the case of Twitter and billions of users in the case of YouTube. And so, they were using analogies like cellphone companies or beeper companies to try to sort of get at, you know, what liability should exist or should have existed for those types of companies, when they’re providing, like, communication services generally open to anyone, and then someone takes that service, uses that service in a way that is harmful or could be civil — you know, could create culpability either under criminal law or civil liability. And so, what you do to sort of deter — or, what should the law do to sort of deter the service from extending their services to those types of individuals? And I think what they struggled with is, you know: Where do we place that liability without actually undermining sort of the entire purpose of Section 230 or, generally, the people’s — these platforms’ First Amendment rights and users’ First Amendment rights to sort of make these platforms and use these platforms to speak online?

AMY GOODMAN: And so, what direction do you think the justices are going in, based on their questioning? And how concerned are you?

AARON MACKEY: I think we continue to be concerned, because this case, in Gonzalez, in particular, with Section 230, the plaintiffs in the case have proposed a test that says if any platform is recommending what they call sort of promoting user-generated content, that promotion or recommendation would fall outside of Section 230. And so, the potential danger there is that platforms organize all of our content in a variety of ways, whether it’s organizing it by letting users vote things up and down, as in the case of Reddit, or in allowing sort of recommended videos of “We saw you watch this on YouTube. We think you might like this next.” And so, the danger there is that the — you know, are sort of twofold. The first is that the platform is not going to host certain types of information because it’s not going to want to be accused later of recommending that content. And then, the other danger is that the platforms are not going to organize content in ways that we’ve grown accustomed to. And what the plaintiffs have proposed is to basically make all these services like a search engine, where we, as the users, have to go on in and find things. And I think that makes it very difficult for us to easily access material we want, but I think it also makes it really difficult for individual creators and speakers to find the audiences that they desire.

AMY GOODMAN: Aaron, before you go, I wanted to ask you about a lawsuit against San Bernardino Superior Court seeking transparency of search warrants used by law enforcement to gain data from cellphones in criminal investigations. This involves cell-site simulators. Can you explain what’s at stake? And so often, especially in the case of reproductive rights, we see that one case in one local area can determine law ultimately in the United States.

AARON MACKEY: Yeah. So, EFF had filed a petition to unseal a number of these search warrants in San Bernardino County because news reports had shown that law enforcement in San Bernardino County were filing more search warrants and were potentially using cell-site simulators at a higher rate than any county in the entire state. And so, a cell-site simulator operates by mimicking a cellphone tower and pretends to be one, and so that everyone in an area near a cell-site simulator, their phone connects to it. And they sort of vary in their functionality, but, generally, our concerns are that everyone’s privacy —

AMY GOODMAN: They’re like StingRay devices.

AARON MACKEY: Yeah, that was a brand name created by the Harris Corp., but there are a number of them. But we were concerned that everyone’s sort of private information is sort of captured by these devices, and they create these sort of broad searches. So, as you said, you could deploy one near a sensitive site like a health facility, and you could have the potential to collect a variety of information about people who are totally unassociated with any sort of criminal activity or the particular investigation that the police are seeking authorization to deploy it. And so, our concerns with the deployment of these types of surveillance tools is that they’re generally done in a broad fashion in ways that I think raise potential Fourth Amendment concerns for everyone who happens to be in the vicinity and is subject to the search but is not sort of under any criminal suspicion.

AMY GOODMAN: And has the information gained from people who are just randomly picked up, swept up in the search been used in court against them?

AARON MACKEY: Yeah, I think that is a big question that we don’t know. We know in a variety of other contexts that it is, that law enforcement often engages in what’s known as parallel construction, where they obtain material in a variety of ways that might violate the Fourth Amendment, but then sort of paper over it by using sort of other documents and claiming about how they got this information. So, that is the concern, that once police have this information, they may misuse it and go after people for a variety of benign behavior or even protected First Amendment activity.

AMY GOODMAN: Well, Aaron Mackey, we want to thank you so much for being with us, senior staff attorney with the Electronic Frontier Foundation. But we’re staying with the internet and its origins. We’re going to talk to Malcolm Harris, author of the new book, Palo Alto: A History of California, Capitalism, and the World. Then we’ll talk about the ERA. Stay with us.
