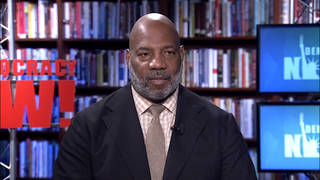

Guests

- Roger McNamee, author of Zucked: Waking Up to the Facebook Catastrophe. He had a 34-year career in Silicon Valley and was an early investor in Facebook.

Facebook CEO Mark Zuckerberg testifies on Capitol Hill Wednesday, where he is expected to face questioning about the company’s cryptocurrency Libra, among other issues. Zuckerberg has faced scrutiny before, including for Facebook’s role in the 2016 U.S. presidential election. A former mentor of Zuckerberg and longtime Silicon Valley investor, Roger McNamee, speaks out about the company’s dismissal of Russian interference in the election. “They treated it like a PR problem, not a business issue,” McNamee says.

More from this Interview

- Part 1: Zucked: Early Facebook Investor Roger McNamee on How the Company Became a Threat to Democracy

- Part 2: Mark Zuckerberg’s Former Mentor: I Tried to Raise Alarm over Russian Interference But Was Ignored

- Part 3: Big Tech Platforms Have Had a “Profound Negative Effect on Democracy.” Is It Time to Break Them Up?

- Part 4: Early Facebook Investor: We Need to Hold Big Tech Accountable for Creating “Toxic Digital Spills”

Transcript

AMY GOODMAN: This is Democracy Now!, democracynow.org, The War and Peace Report. I’m Amy Goodman, as we continue with Roger McNamee, author of Zucked: Waking Up to the Facebook Catastrophe. He has had a 34-year career in Silicon Valley, was an early investor in Facebook. So, talk about how you met Mark Zuckerberg, and talk about the evolution of the company.

ROGER McNAMEE: So, Amy, when I met Mark, it was in 2006. I had already spent 24 years, nearly half of my life, investing in Silicon Valley. And it was a normal thing for me to be consulted by young entrepreneurs who just wanted some perspective on how Silicon Valley worked. I got an email from one of Mark’s colleagues saying, “My boss has a crisis. Would you be willing to take a meeting with him?”

I was really excited about the idea, because while I could not use Facebook — at that time, you had to be a student with an email address from your school — I was convinced Facebook was the next big thing. I was convinced that the requirement that you had to be who you said you were, with authenticated identity from your school email address, and the ability to control who could see your private information — I thought those two things changed everything and would allow Facebook to eventually be bigger than Google was at that time. So I was really excited to meet Mark.

And he comes into my office. And I tell this story in the book; I’ll give you the very short version of it. I said, “Mark, before you say anything, I’ve got to tell you why I took this meeting.” Because, I said, “I think you’ve got the most important thing since Google. But before long, either Microsoft or Yahoo is going to offer a billion dollars for Facebook, and everybody you know is going to tell you to take the money. And I’m here to tell you that if you want to see your vision come to fruition, you have to do it yourself.”

What followed that, Amy, was the most painful five minutes of my entire life. Mark, one of his great strengths is he does not act without thinking things through very carefully. And in this particular context, he decided to think about what I said before he reacted. He wanted to decide if he trusted me. But it took five minutes. And you know from television how painful silence can be when you’re expecting a response from someone.

Long story short, it turned out that Yahoo had offered a billion dollars, and, as I had predicted, everyone told Mark to sell the company. And that was the reason he was there to see me. He wanted to find out how he could keep the company independent. I helped him solve that problem. And for three years thereafter, I was one of his mentors. And my role was very narrow but important to both of us, because everybody in the company had wanted to sell out, and so he needed to rebuild his management team.

And the most important person that I recommended to him and helped to bring into the company was Sheryl Sandberg. And I had known Sheryl for a number of years because she had introduced me to Bono, the U2 lead singer, who was my business partner. He became my business partner after Sheryl introduced him. And so I knew Sheryl really, really well, and I had helped her get into Google, and, you know, we were very close. And I thought she’d be a really good fit for Mark. I thought she would — the two of them would balance each other and provide complementary skills. And for many years, that was true.

AMY GOODMAN: So, talk about when you started to get disillusioned.

ROGER McNAMEE: So, imagine, it took place in two phases, Amy. The first part of it was I got disillusioned with Silicon Valley. Beginning around 2010, with the financing of Spotify, and then going on to Airbnb, Uber, Lyft and then Juul, I started to see companies that were clearly the best of what Silicon Valley had to offer but whose essential being violated my values, that Airbnb and Uber and Lyft were really about breaking the law, that they basically said, “The law doesn’t apply to us.” And Spotify was about essentially profiting at the expense of musicians. And Juul, of course, was about addicting young people to nicotine. And I just said, “You know, I can’t keep investing other people’s money if I’m not willing to invest in the best stuff coming out of my community.” So I decided it was time for me to retire. And when I made the decision in 2012, my fund still had a few years to run, so I had to wait ’til it ended. It ended at the end of 2015.

And then, immediately, January of 2016, in the context of the Democratic primary in New Hampshire, I saw hate speech being circulated from Facebook groups that were notionally associated with the Bernie Sanders campaign. And it was obvious that Bernie had nothing to do with it, but the thing that was also obvious was that the hate speech was spreading so virally that someone had to be spending money to get my friends into these groups. And that really disturbed me. Then I saw civil rights violations, relative to Black Lives Matter, taking place, using the advertising tools of Facebook. But the clincher for me came in June of 2016 with the Brexit referendum in the United Kingdom. That was the first time that I realized, “Oh my god. The same advertising tools that make Facebook so useful for a marketer can be used to undermine democracy in an election.” That was when I started looking for allies, and I couldn’t find any.

And eventually, I was given an opportunity to write an opinion piece for the Recode tech blog to raise my concerns. And before I got finished with it, in early October, the U.S. government announced the Russians were interfering in the election. And at that point I scrambled to finish the draft, and I sent it to Mark Zuckerberg and Sheryl Sandberg. I mean, they were my friends. I had been their mentor. And I was really worried that something about the culture, the business model and the algorithms of Facebook was allowing bad actors to harm innocent people. And I couldn’t believe that they would do — that Facebook would do this on purpose. And so I went to warn them, nine days before the presidential election.

And, unfortunately, they treated it like a PR problem, not like a business issue. And they were nice enough in the sense that they had one of their colleagues work with me for three months, you know, to let me try to lay out my case. So, between basically the end of October of 2016 and February of 2017, I was begging Facebook to do what Johnson & Johnson did after the Tylenol poisoning in Chicago in 1982. The CEO dropped everything. He withdrew every bottle of Tylenol from every retail shelf until they invented tamperproof packaging. And I thought Facebook should do something like that, should work with the government to find out everything the Russians were doing, to find out every other way the platform was being abused either to violate civil rights or to undermine democracy.

But for whatever reason, they just — maybe I was the wrong messenger, maybe something about the way I delivered the message didn’t work, but they didn’t take it seriously. And I realized I was faced with a choice. I was so proud of that company, and I had been so closely involved, but I felt like I knew something I had to share with everybody, that I couldn’t just sit back and be retired and let it go by. And so I decided to become an activist, which is something I never anticipated doing. And I’ve spent the last — essentially, the last three years, every day, doing nothing but trying to make the world aware of the dark side of internet platforms like Facebook, like Google, and to help people both protect themselves but to protect their children, to protect democracy and to protect the economy.

AMY GOODMAN: Let me go to Facebook CEO Mark Zuckerberg speaking during a press call last year, in which he dismissed claims that Facebook ignored Russia’s election meddling or undermined investigations.

MARK ZUCKERBERG: I’ve said many times before that we were too slow to spot Russian interference, too slow to understand it and too slow to get on top of it. And we’ve certainly stumbled along the way. But to suggest that we weren’t interested in knowing the truth or that we wanted to hide what we knew or that we tried to prevent investigations is simply untrue.

AMY GOODMAN: OK, your response to that?

ROGER McNAMEE: So, I think in Mark’s mind, he is doing the right thing. But here’s where the problem comes in, Amy. Mark believes that the mission of connecting the whole world on one seamless, frictionless platform is the most important thing anybody can do on Earth, and it justifies any means necessary to get there. I’m sure in his own mind they felt like they’ve been open with people. But the reality is quite different. Facebook is designed to be opaque. Google is designed to be opaque. And in a sense, they work together, because Google manages all of the infrastructure behind digital advertising, and so they play a role in that whole ecosystem that allows companies like Facebook to prevent anyone from inspecting what’s going on.

And the truth is, they had to know before the election that there was something really wrong, because I’m sure I was not the only one who brought it to their attention. But at the end of the day, their view of the world is that they should just connect everybody and not be responsible for the consequences. What they told me was they said, “Roger, the law says we’re a platform, not a media company. We’re not responsible for what third parties do.” And I said, “Mark, Sheryl, this is a trust business. The law doesn’t protect you if your users believe that you’re undermining democracy, if they believe that you’re harming civil rights, if you’re harming public health, if you’re harming privacy. There’s no protection against that.”

And that’s what we’re seeing today. And the issue that I run into is that in 2016 I’m willing to accept that maybe they didn’t pick up the right signals. God knows I didn’t. But once they were informed, once people like me, once President Obama went to Mark, there was no excuse. And they have clearly spent the entire time since then trying to use misdirection to keep us from seeing what really happened there, denying, deflecting and delaying any kind of investigation. If they were really sincere about this, they would have shown us every ad the Trump campaign showed on the platform. They would have shown everything that the Russians did. They would have given us all the billing records. They would have done all of that kind of stuff. But they quite clearly haven’t done that.

And it goes way beyond just elections, right? You have terrorism in Christchurch, New Zealand. You have ethnic cleansing in the Asian country of Myanmar. You have hate speech around the world. You have mass killings in the United States. I mean, all of these things were enabled by internet platforms like Facebook. And they didn’t go out of their way to cause them to happen, but they never put in place any safety nets, any firewalls, anything to protect users from the bad actors who are empowered by the way the algorithms and the culture and the business model of Facebook work.

AMY GOODMAN: Roger McNamee, I want to turn to Mark Zuckerberg speaking at Georgetown University just last week, where he invoked Frederick Douglass, Martin Luther King Jr. and the Black Lives Matter movement to argue that attempts to reduce false information on Facebook could censor free speech.

MARK ZUCKERBERG: In times of social tension, our impulse is often to pull back on free expression, because we want the progress that comes from free expression, but we don’t want the tension. We saw this when Martin Luther King Jr. wrote his famous “Letter from a Birmingham Jail,” where he was unconstitutionally jailed for protesting peacefully. And we saw this in the effort to shut down campus protests during the Vietnam War. We saw this way back when America was deeply polarized about its role in World War I and the Supreme Court ruled at the time that the socialist leader Eugene Debs could be imprisoned for making an antiwar speech. In the end, all of these decisions were wrong. Pulling back on free expression wasn’t the answer. And, in fact, it often ends up hurting the minority views that we seek to protect.

AMY GOODMAN: So that’s Mark Zuckerberg. Civil rights leaders quickly criticized his speech. Martin Luther King Jr.'s daughter Bernice King tweeted, quote, “I heard #MarkZuckerber's [sic] 'free expression' speech, in which he referenced my father. I’d like to help Facebook better understand the challenges #MLK faced from disinformation campaigns launched by politicians. These campaigns created an atmosphere for his assassination,” she said. King is reportedly visiting the Facebook headquarters this week. Your response, Roger?

ROGER McNAMEE: I’m with Bernice. And I’ve got to tell you, I think what Mark said there was horrific. I mean, it was disingenuous. It was not an honest telling either of what Facebook does or of how history worked. Here’s the issue: Mark wants us to treat Facebook with respect on First Amendment grounds, that this is an issue of free speech. And here’s where the problem comes up. The question that we’re talking about here is whether Facebook should be allowed to have falsehoods in political advertising. They had a rule for the longest time that they couldn’t have false advertising anywhere. When they were called out on this issue recently, instead of fixing the problem, they changed the rule to say, “From now on, we’re not going to have fact-checking of political ads.”

So the question is: Does that constitute free speech? Is that about free expression? And I would argue that’s not anything at all about that. And the reason is because Facebook ads are microtargeted. The only people who see them are people who are carefully selected. So, in an issue of free speech, if everyone got to see every single ad in the political context, then Mark’s statement might have a little bit of viability. But they don’t. This is all about organized campaigns designed to manipulate vulnerable populations.

And the second problem we have here is that Mark’s business, based on advertising, requires him to amplify the most engaging content that people see. And the problem is that the most engaging content on any internet platform is the stuff that triggers flight or fight, our most basic human instinct. Well, in that context, we’re really talking about hate speech, disinformation and conspiracy theories. And there is no way in God’s green Earth you can convince me that free expression is aided by the amplification of hate speech, disinformation and conspiracy theories over facts or over any kind of neutral conversation. I just think everything Mark’s — everything he’s saying is completely self-serving, it’s not intellectually honest, and none of us should take it seriously.

AMY GOODMAN: On Capitol Hill last year, Cambridge Analytica whistleblower Chris Wylie told the Senate Judiciary Committee that the voter profiling company sought to suppress turnout among African-American voters and preyed on racial biases. Wylie testified that Cambridge Analytica harvested the data of up to 87 million Facebook users without their permission and used the data to, quote, “fight a culture war.” Cambridge Analytica was founded by billionaire Robert Mercer. Trump’s former adviser Steve Bannon of Breitbart News was one of the company’s key strategists. Wylie said he left the company due to efforts to disengage voters and target African Americans. This is Chris Wylie.

CHRISTOPHER WYLIE: Cambridge Analytica sought to identify mental vulnerabilities in voters and worked to exploit them by targeting information designed to activate some of the worst characteristics in people, such as neuroticism, paranoia and racial biases. To be clear, the work of Cambridge Analytica is not equivalent to traditional marketing. Cambridge Analytica specialized in disinformation, spreading rumors, kompromat and propaganda.

AMY GOODMAN: So, that’s Cambridge Analytica whistleblower Chris Wylie testifying before the Senate Judiciary Committee. Roger McNamee, if you can talk about the whole explosive — the whole exposé of Cambridge Analytica and its relationship with Facebook, and how Zuckerberg dealt with it?

ROGER McNAMEE: So, the thing to understand, Amy, is that Cambridge Analytica was in the political psychological operations business. They were willing to sell their services to anyone, and they sold them in many countries around the world. They essentially had some kind of relationship to the Leave.EU campaign for Brexit in the United Kingdom, and they had a relationship with the Trump campaign — originally with the Ted Cruz campaign, but then the Trump campaign.

And their goal was very simple, was to identify groups whose votes could be suppressed. And in the context of the Trump campaign, that was suburban white women, people of color and idealistic young people. And essentially, the goal was not to convert them from being Clinton voters to being Trump voters, but in fact to find Clinton voters who could be, with a targeted — microtargeted, focused campaign, discouraged from voting at all. And it was demonstrably successful.

And the thing is, there is no law against it today. The way things are set up, we never anticipated the ability to have the level of addiction that a smartphone would create, and therefore the ease of emotional manipulation that that would allow in a political context. Microtargeting, as practiced by Facebook, Instagram, Google, YouTube, in the context of a political campaign, is asymmetrical in the most extreme form, because an ethical campaign will not use that kind of technology; only an unethical campaign will.

And so, at the end of the day, we’re left with a situation where it empowers people who have bad intentions at the expense of democracy itself. And we need to have laws that reflect the impact of technology in our democracy, and that is one of the highest priorities, in my mind, that Congress should have, both as an election issue in 2020 and then for the period immediately following.

AMY GOODMAN: As Mark Zuckerberg goes to Capitol Hill today to testify, we’re speaking to Roger McNamee, author of Zucked: Waking Up to the Facebook Catastrophe. He was an early investor in Facebook, knew Mark Zuckerberg very well. When we come back, I want to ask you about the presidential candidates and their position on the issues of trust and antitrust and Facebook, what should happen to it and other companies. Stay with us.
