Guests

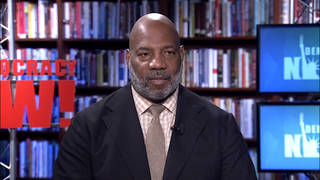

- Jameel Jaffer, founding director of the Knight First Amendment Institute at Columbia University.

Social media giant Facebook has announced it has suspended former President Donald Trump’s account until at least 2023. He was initially suspended from the platform for comments to supporters who stormed the U.S. Capitol on January 6 and is permanently banned on Twitter. Facebook’s move could have implications for other world leaders who use Facebook, like Brazilian President Jair Bolsonaro and Indian Prime Minister Narendra Modi. Jameel Jaffer, director of the Knight First Amendment Institute, said he is not sure the decision struck the right balance for protecting free speech but called it defensible because Facebook “has a responsibility to ensure that the people using its platform aren’t using it to undermine democracy or incite violence.” He argues, however, that the much bigger issue is Facebook’s engineering and design decisions, such as its ranking algorithms, which determine which speech gets heard and which gets marginalized.

Transcript

AMY GOODMAN: Let me ask you about Facebook. The social media giant has announced it’s suspended former President Donald Trump’s account until at least 2023. He was initially suspended from the platform for comments to supporters who stormed the U.S. Capitol January 6th. Trump slammed Facebook’s decision during his first public speech after being president, Saturday, at the North Carolina Republican Party convention. This is what he said.

DONALD TRUMP: They may allow me back in two years. I’m not — I’m not too interested in that. They may allow me back in two years. We’ve got to stop that. We can’t let it happen. So unfair. They’re shutting down an entire group of people, not just me. They’re shutting down the voice of a tremendously powerful — in my opinion, a much more powerful and a much larger group.

AMY GOODMAN: Interestingly, President Trump recently shut down his own blog after less than a month, reportedly because he felt the low readership made him look small and irrelevant. He’s permanently banned on Twitter. The Facebook suspension of Trump could have implications for other world leaders who use Facebook, like the Brazilian President Jair Bolsonaro, the Indian Prime Minister Narendra Modi. The significance of this?

JAMEEL JAFFER: Yeah, I mean, I don’t — I find it difficult to shed tears for Trump here. I do think that it’s a good thing if the social media companies, especially the big ones, like Facebook, have a heavy presumption in favor of leaving speech up, especially political speech and especially the speech of political leaders. That’s not because I think political leaders have the right to be on social media, but because the public needs access to their speech in order to evaluate their decisions and hold them accountable.

Now, that said, there are limits. And I think Facebook also has a responsibility to ensure that the people who are using its platform aren’t using it to undermine democracy or incite violence. And I think that Facebook’s decision here is — you know, with respect to Trump, is a defensible one. I’m not sure that it’s struck exactly the right balance. I think reasonable people can disagree about that. But I think that its decision is defensible.

I think that the bigger issue here, though — and it’s a little bit frustrating that, you know, everybody is so — it’s predictable and also frustrating that everybody is so focused on the ruling with respect to Trump. The much bigger issue here isn’t sort of content moderation decisions, you know, questions about which accounts stay up or which accounts stay down or which content stays up and which content stays down. The much bigger issue here has to do with Facebook’s own engineering and design decisions, because those decisions are really the decisions that determine which speech gets traction in public discourse and on the platform, which voices get heard, which voices get amplified, which voices get marginalized. That’s not about content moderation. That’s a result of Facebook’s engineering decisions, you know, its ranking algorithms, its policies with respect to political advertising. All of those things are much, much more important than who’s on the platform and who’s off. And I think that’s where the public’s attention should be, on Facebook’s design and engineering decisions.

You know, Facebook sometimes says, “We don’t want to be the arbiters of truth. Nobody wants us to be the arbiters of truth.” That’s true. I don’t want Facebook to be the arbiter of truth. But I do want Facebook to take responsibility for its engineering and design decisions. I want it to take responsibility for the way those decisions shape and often distort public discourse. And I don’t see the company doing that right now.

To the contrary, if you look at the response that they filed last week when they announced the deplatforming of Trump, or the continued deplatforming of Trump — you know, many, many groups, including the Knight Institute, had called on Facebook to commission an independent study of the ways in which its design decisions might have contributed to the events of January 6th. And Facebook not only rejected that proposal, but there’s a pretty remarkable paragraph in its 20-page statement, in which it says the responsibility for the January 6th events lies entirely with the people who engaged in those acts — essentially saying Facebook doesn’t bear responsibility here.

And obviously the people who were in Washington on January 6th and were breaking into the Capitol bear responsibility for their acts, but Facebook, too, bears responsibility for its engineering and design decisions that resulted in misinformation being spread so freely on the platform, people being shunted into echo chambers. That is the result, again, of Facebook’s own decisions. And it’s really disturbing that Facebook doesn’t seem even to acknowledge it.

AMY GOODMAN: What about the fact that you’re talking about these mega-multinational corporations, for example, like Facebook? They’re the ones who are determining who should have the right to free speech. I mean, it’s Mark Zuckerberg —

JAMEEL JAFFER: Yeah.

AMY GOODMAN: — who’s appointing this committee, that then makes the recommendations, essentially under his control.

JAMEEL JAFFER: Yeah. I mean, I actually am not unsympathetic to the arguments that some conservatives are making that the social media companies — I say “the social media companies”; I’m thinking mainly of Facebook and Google, but the big companies — have too much power over public discourse. Now, what the right answer to that, I think, is — you know, “What’s the right answer to that?” is a difficult question. I don’t think it would be better if the government made these decisions rather than Facebook.

But there are other ways to tackle monopoly power. You know, antitrust action is one possibility. There are also regulations that could require the companies to be more transparent than they are right now. I’ll give you just one example here. Political ads on Facebook are pretty opaque. You can target a political ad to a very narrow community on Facebook. And some of those political ads include misinformation. And if you target a narrow community on Facebook with that kind of misinformation, it’s very difficult for others to determine which community has been targeted in that way and to respond to the speech or correct the speech. And that has implications for public discourse. And I think that Congress could require Facebook to be more transparent about political advertising. And that’s just one way in which, you know, at least at the margin, we could limit the power that these companies have over public discourse that is closely connected to the health of our democracy.

AMY GOODMAN: And finally, Jameel, we just have about 30 seconds, but Senator Maria Cantwell, head of Commerce Committee, proposing $2.3 billion in grants and tax credits to sustain local newspapers and broadcasters as part of the infrastructure act, the idea that newspaper after newspaper is going over, local journalism is so deeply threatened, hedge funds are taking over the newspapers that have survived. What do you make of this?

JAMEEL JAFFER: Yeah, so, I don’t know the details of that bill, but, in principle, I think it’s a good idea. I do think that journalism should be seen as infrastructural, in a way. You know, I do think that certain kinds of journalism are a public good, which means that it’s going to be undersupplied by the market. You should have asked Joe Stiglitz about this. But, you know, as a result, government has a role to play in ensuring that that good is supplied.

Now, that said, it’s important that the government’s role here be carefully limited, because we don’t want to create a situation where the government is picking and choosing which journalism gets supported. So the structure here is really, really important. But again, I haven’t seen the details of that bill. I do think that, in principle, it’s a good idea.

AMY GOODMAN: Jameel Jaffer, I want to thank you for being with us, director of the Knight First Amendment Institute at Columbia University, previously the ACLU’s deputy legal director.

Next up, we look at Israel’s ongoing crackdown on Palestinians. On Sunday, once again, Israeli forces detained Mohammed El-Kurd, this time along with his twin sister Muna, two of the most prominent activists fighting eviction from their homes in Sheikh Jarrah. Stay with us.
